Friday May 1st, 2026

This week, photo editing in iOS 27 could be very exciting, Vision Pro could be quietly heading for the exit, and could Apple seriously be considering ditching MagSafe?

Photo Editing could finally be good in iOS 27...

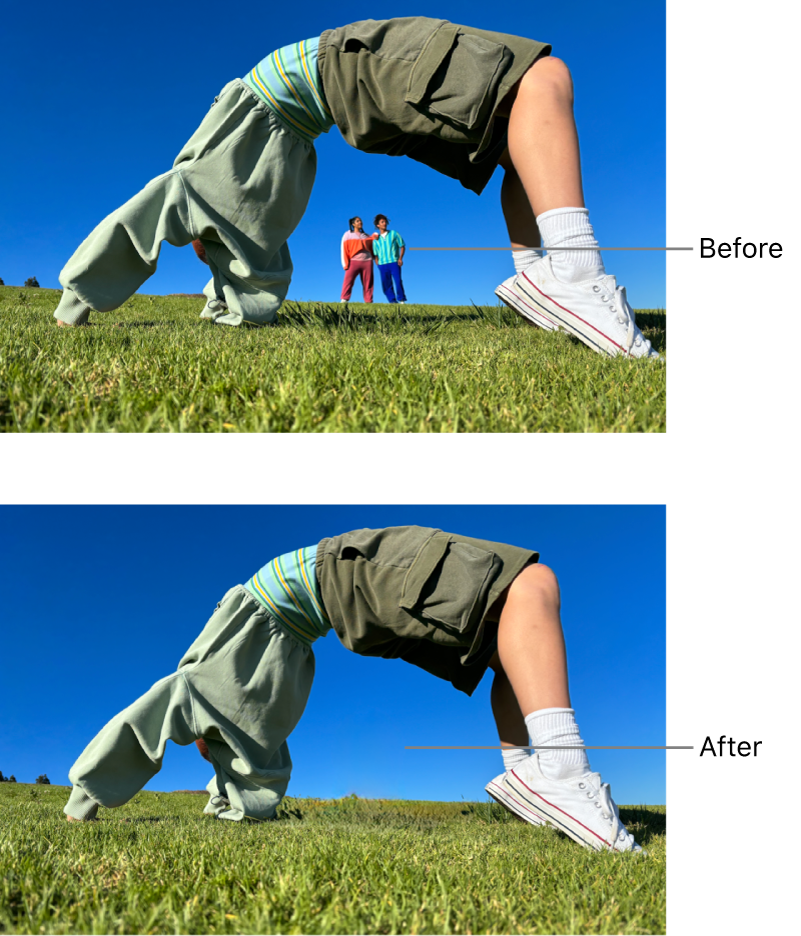

Bloomberg's Mark Gurman reported this week that iOS 27 will bring a significant upgrade to the Photos app, with a new "Apple Intelligence Tools" menu featuring three core AI-powered editing features: Extend, Enhance, and Reframe. Extend will use generative AI to expand an image beyond its original borders, essentially filling in what's outside the frame. Enhance will automatically improve lighting, colour, and overall image quality in a single tap. And Reframe will let you adjust the composition of a photo after you've taken it, shifting the subject within the frame without cropping. The existing Clean Up tool, which lets you remove unwanted objects from photos, will reportedly be folded into the same suite rather than sitting separately. All of this is expected to run on device, with processing completed in seconds.

It sounds promising on paper. But I'll be honest, I'm going to need to see it working before I get excited, because Apple's track record with AI photo editing so far has been underwhelming at best.

Since Apple Intelligence launched, the only feature I've found even semi-useful has been Clean Up, the object removal tool. And "semi-useful" is doing a lot of heavy lifting in that sentence. It works well enough if you want to remove, say, a dog toy sitting on a lawn where the background is simple and repetitive. But try to remove anything more complex, a person, a shadow, an object near an edge, and the results fall apart quickly. It's fine for very basic touch-ups, but it's nowhere close to what Google and Samsung have been shipping on their phones for the past couple of years. Google's Magic Eraser and Samsung's generative editing tools are genuinely impressive and have been for a while. And that's before you even consider what you can now do with the likes of Claude, Gemini, and ChatGPT, which can perform complex image edits from a simple text prompt that would have felt like science fiction three years ago.

The gap is real, and Apple knows it. The fact that multiple reports have explicitly described these new tools as Apple's attempt to "take on Samsung Galaxy AI features" tells you everything about where Apple sees itself right now: playing catch-up.

The opportunity here is enormous, though. Photo editing is one of those areas where AI can genuinely make a difference to ordinary people's lives, not in a flashy, headline-grabbing way, but in a practical, everyday way. Most people aren't professional photographers. They take photos of their kids, their holidays, their meals, their pets. They want those photos to look good, and they want fixing a bad crop or a cluttered background to be easy. If Apple can deliver tools that make that effortless, right there in the Photos app without needing a third-party editor, that's a huge win.

But here's the concern. According to Gadget Hacks, who dug into the reliability of the reporting, Enhance and the improved Clean Up look relatively straightforward to ship. They're covering ground that other AI tools have handled reliably for years. The two more ambitious features, Extend and Reframe, are reportedly still struggling in testing. These are the ones that would actually differentiate Apple from the competition, and they're the ones that might not be ready by launch. If Apple takes the stage at WWDC on 8 June and demos Extend and Reframe with confidence, that's a very good sign. If those features get announced with "available later this year" language, that's the same pattern we've seen with every delayed Apple Intelligence feature, and people are starting to notice.

This matters because patience is running thin. Apple Intelligence was announced two years ago, and the overwhelming sentiment from most users is still "what does it actually do?" The writing tools are underwhelming. Genmoji is a gimmick. The notification summaries were so unreliable they had to be pulled back. Clean Up is limited. Siri is still Siri. If iOS 27 launches with another round of AI features that feel half-baked or arrive months late, I think a lot of people are going to start asking serious questions about whether Apple Intelligence is ever going to deliver on its original promise.

Photo editing is the perfect area for Apple to prove the doubters wrong. It's visual, it's tangible, and it's something people will actually use every day. Get it right, and it could be the moment Apple Intelligence finally starts to feel real. Get it wrong, and it's another bullet point on a growing list of missed opportunities.

WWDC is five weeks away. No pressure.

You watch the videos? But how much do you remember?

If you're anything like most of my audience, you've probably watched dozens of iPhone tips videos over the years. Maybe even hundreds. You've bookmarked a few, saved some to Watch Later, maybe even scribbled a note or two. But when you actually need that tip, when you're standing there trying to remember how to do that thing you definitely saw in a video once, it's gone. You can't remember which video it was in, what it was called, or whether it was even on my channel or someone else's.

That's not a you problem. That's a content problem. YouTube is brilliant for discovery, but it's terrible for reference. Tips get buried in ten-minute videos, mixed in with ads and sponsor reads, and once you've scrolled past them, they're effectively lost. You'd have to rewatch the entire video just to find the one thing you needed.

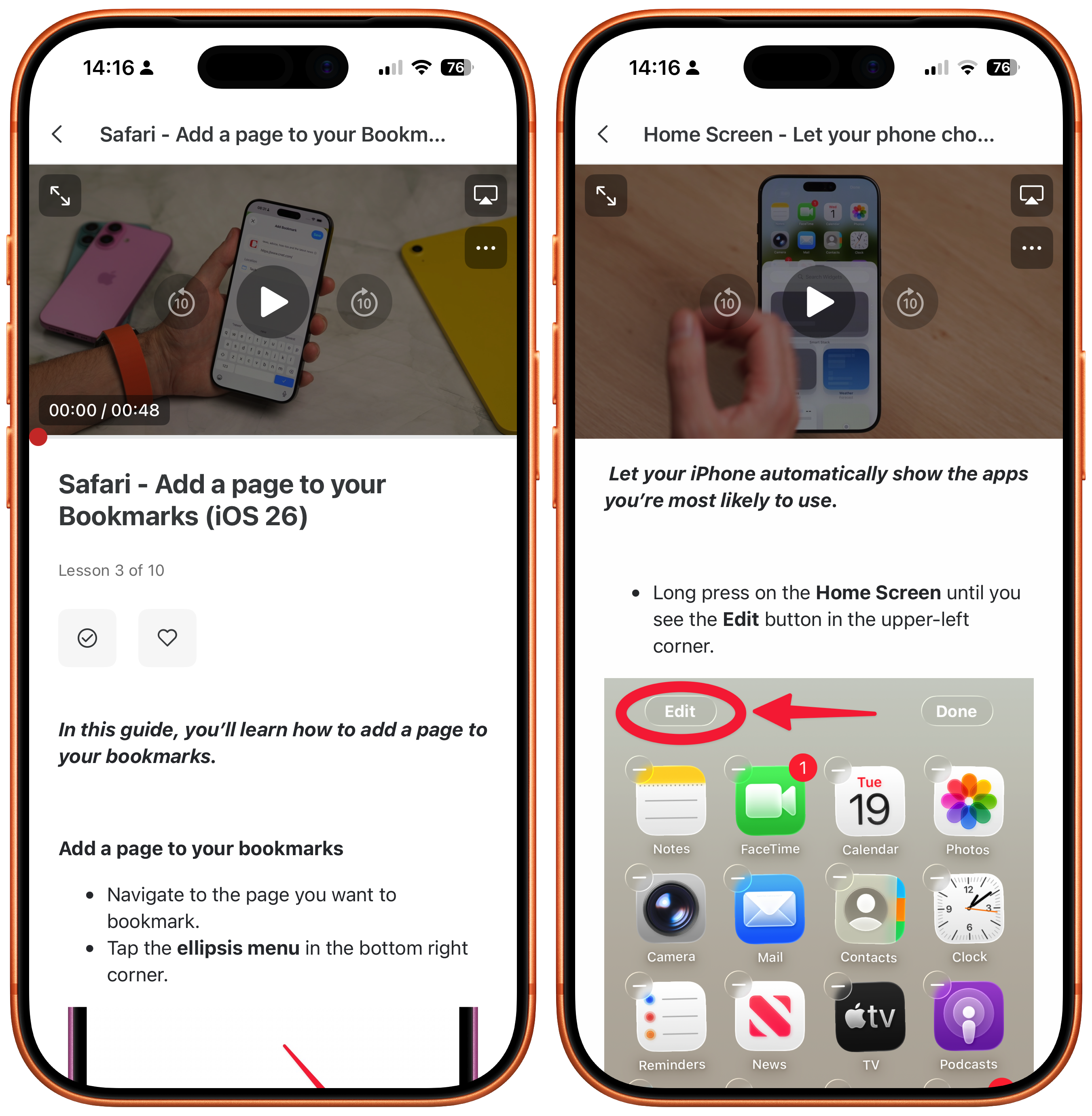

iPhone Essentials Plus was built to solve exactly this. It's not another set of videos to watch and forget. It's a structured, searchable library of over 250 lessons (and growing), each one focused on a single topic, with a video walkthrough, a written step-by-step guide, and a downloadable PDF you can keep. The tips you've half-remembered from a YouTube video, organised and accessible whenever you need them.

The course is updated regularly as Apple releases new features, so it stays current with your phone. I've also recently added ad-free, sponsor-free versions of my YouTube videos as bonus content, and a brand new standalone course called iPhone Battery Made Easy is now included at no extra cost.

It's a one-time purchase, no subscription, with lifetime access. If you've ever thought "I know I saw a tip for this somewhere," this is the answer.

Purchase Links:

- iPhone Battery Made Easy

- iPhone Essentials Plus

- Mac Essentials Plus

- iPhone & Mac Essentials Plus Discount Bundle

Is this the end for Vision Pro?

It's looking increasingly like Apple's most ambitious product might also be its shortest-lived. MacRumors reported this week that Apple has "all but given up" on the Vision Pro, with the dedicated hardware team reportedly being redistributed to other projects, including Siri and smart glasses development. Bloomberg's Mark Gurman backed this up, confirming that Apple broke up the Vision Products Group last year, splitting it between software and hardware engineering, and has since reassigned much of the software team to Siri and the hardware team to its upcoming smart glasses.

The numbers tell a stark story. Apple has sold approximately 600,000 Vision Pro units worldwide since it launched in February 2024. For context, Apple sells that many iPhones in about a day. MacRumors also reported that the return rate for Vision Pro has been "unusually high," far exceeding any other modern Apple product. The M5 refresh that arrived last October was supposed to help. It brought a faster chip, a 120Hz refresh rate, 30 extra minutes of battery life, and the new Dual Knit Band designed to redistribute weight more comfortably across the head. But the price stayed at $3,499/£3,499, and consumers still weren't interested. The fundamental problems, the weight, the comfort, the limited app ecosystem, and the fact that most people simply don't know what to use it for, ran too deep for a spec bump to fix.

Speaking from personal experience, I own two of those 600,000 units. I bought the original M2 version at launch and upgraded to the M5 model when it came out. The M5 is better, the screen is noticeably smoother and the new band is a genuine improvement. But after two years of trying to make this thing a regular part of my life, I still reach for it maybe once or twice a month, and I almost always take it off within an hour because my face hurts. If someone who creates Apple content for a living can't find a compelling daily use case, I'm not sure who can.

There was talk for a while about a lighter, cheaper "Vision Air" that might have broadened the appeal, but that project has reportedly been scrapped in favour of the smart glasses we've discussed in previous newsletters. That feels like the right call. A pair of lightweight glasses with cameras, speakers, and Siri integration is a product that people might actually wear all day. A 1.3-pound headset that costs as much as a MacBook Pro and leaves you with a sore nose after 45 minutes is not.

That said, there is a reason to pause before writing the obituary entirely. Apple is still actively hiring for its Vision Products Group. Several job listings have appeared on Apple's careers page in recent months that specifically reference Vision Pro and visionOS. Some of those roles mention expanding the technology to iOS and macOS, which suggests Apple sees value in the underlying platform even if the hardware itself isn't selling. It's possible that Vision Pro lives on as an enterprise or developer tool rather than a consumer product, or that the technology feeds into future products we haven't seen yet. But as a mainstream consumer device? It's hard to see a path forward.

There's also a practical dimension to this that's worth flagging. Right now, Apple is dealing with a global RAM shortage that's causing serious supply chain headaches. High-end Mac mini and Mac Studio configurations have been listed as "currently unavailable" for weeks, with some models quoting delivery times of four to five months. The M5 Mac mini and M5 Mac Studio, which were widely expected to launch around WWDC in June, are now believed to have been pushed back to as late as October. The reason is simple: demand for memory from AI data centres is so intense that it's squeezing supply for everything else. DRAM prices saw their largest quarterly increase on record in Q1 2026, and Apple has already had to remove the 512GB RAM option from the Mac Studio entirely and increase the price of its 256GB upgrade by $400.

In that environment, it makes perfect sense for Apple to be selective about where it allocates its limited memory supply. iPhones sell hundreds of millions of units. MacBooks sell in the tens of millions. The Mac mini and Mac Studio are important but lower volume. And the Vision Pro? Six hundred thousand units in two years. If you're Tim Cook, or rather John Ternus come September, and you're staring at a constrained supply of components, you're going to prioritise the products that actually move the needle. Pouring resources into a headset that almost nobody is buying, while your best-selling desktops are out of stock, would be a very difficult decision to justify.

None of this means Apple will never return to spatial computing. The technology is impressive, the software team has built something genuinely novel with visionOS, and there are real use cases in enterprise, healthcare, and specialised professional work. But as a product you can walk into an Apple Store and buy for the price of a decent holiday? Vision Pro increasingly looks like it was a brilliant prototype that never found its audience. And with smart glasses on the horizon, Apple appears to have quietly accepted that the future of wearable computing sits on your nose, not over your entire face.

Could Apple REALLY ditch MagSafe?

One of the things I find myself thinking about more and more is just how many competing pressures Apple's product engineering team has to juggle every time they sit down to design the next iPhone. It's easy to look at a finished product and assume it all came together smoothly, but behind the scenes there must be dozens of decisions being made that involve genuine trade-offs, and this week we got a glimpse at one of the most interesting ones in a while.

A well-known Weibo leaker called Instant Digital, who has a solid track record with Apple rumours, posted this week that there is "a lot of internal debate" at Apple right now about whether MagSafe should remain a standard feature on iPhones. When MagSafe was first introduced with the iPhone 12 in 2020, the internal stance was reportedly very aggressive. Apple had plans to bring it to iPads as well, and the company contributed its magnetic alignment technology to the Qi2 wireless charging standard that the broader industry has since adopted. But now, according to this leaker, confidence is wavering.

No decision has apparently been made, and it's worth saying upfront that I'd be surprised if MagSafe disappeared from the main iPhone lineup any time soon. But the fact that the conversation is happening at all tells you something about the kind of engineering dilemmas Apple is navigating right now.

Here's the thing. The magnet array inside your iPhone is not small. It's a ring of powerful magnets that takes up meaningful internal space and adds weight and manufacturing cost to every single device. When you're building a phone that's a standard 8mm thick, that's manageable. But when you're trying to push into genuinely thin territory, and Apple clearly is, those magnets become a problem. The iPhone Ultra, Apple's upcoming foldable, is rumoured to be just 4.5mm thin when unfolded, and leaked dummy units show no visible MagSafe indentation at all. The iPhone Air launched last year without MagSafe initially either, before Apple restored it under pressure. There's a pattern forming here: every time Apple tries to go thin, MagSafe is one of the first things that gets squeezed.

And this is just one example of the kind of constraints Apple's engineers are dealing with. The EU has new legislation coming into force in February 2027 that will require smartphones sold in the European Union to have batteries that are "readily removable and replaceable" by the end user without specialist tools. Apple may end up being exempt, because iPhones from the 15 onwards already meet the 80% capacity after 1,000 cycles threshold that qualifies for an exemption. But even the possibility of that regulation has to factor into how Apple designs its hardware. Every millimetre inside an iPhone is spoken for, and every new constraint, whether it's a legal requirement, a wireless charging standard, or a desire to make the phone thinner, forces a trade-off somewhere else.

The MagSafe question is fascinating because, unlike the EU battery rules or the switch to USB-C, this isn't about complying with legislation. It's about Apple asking itself whether a feature it created, popularised, and built an entire ecosystem around is now getting in the way of where it wants to take the hardware next. The "Glasswing" project, which is rumoured to be a radical "single sheet of glass" iPhone redesign for 2027, would almost certainly require rethinking the internal layout from scratch. If Apple's vision for the future of the iPhone is thinner, lighter, and more minimal, MagSafe's chunky magnet ring is a legitimate obstacle.

That said, I think it's really hard to overstate how popular MagSafe has become. I use it constantly. MagSafe chargers on my desk, MagSafe mount in the car, MagSafe wallet on the back of my phone. And I know I'm far from alone. When Apple launched the iPhone 16e without MagSafe last year, it was the single biggest criticism from reviewers and customers, and Apple reversed the decision with this year's iPhone 17e. Third-party manufacturers have built entire businesses around the standard. Removing it from the core iPhone lineup would break compatibility with millions of accessories and frustrate a huge chunk of the user base.

So where does this leave us? My best guess is that MagSafe stays on the Pro and standard iPhones for the foreseeable future, but we might see it dropped from specific models where thinness is the priority, like the iPhone Ultra and potentially a future iPhone Air. Apple has form for this kind of thing. It removed the headphone jack in 2016 and the industry eventually followed. It's possible that Apple sees a future where wireless charging doesn't need magnets at all, and MagSafe becomes another feature that people look back on fondly but that ultimately didn't survive the relentless pursuit of thinner hardware.

For now, though, your MagSafe charger is safe. Probably.

Tip of the Week

Did you know that if you have a note in the Notes app and you'd like to export it as a PDF, you can do that really easily? Tap the Share button at the top of the screen, then tap the View More button. In here, choose Print. This turns the note into a PDF, and you can then tap the Share button at the top of the page and share it using any normal method.